Understanding Live Video Streaming Software: Your LiveSwitch Resource

As software developers, we know how frustrating it can be to be boxed into using cookie-cutter video conferencing apps and developing with inadequate APIs. So when designing LiveSwitch, we decided to depart from the status quo and give teams something truly versatile to build perfect user experiences tailored to their exact specifications.

What is LiveSwitch?

LiveSwitch is an enterprise-grade flexible live video streaming platform. It is available in two unique offerings: as a fully managed cloud video platform or as an on-premise server stack installable on the server infrastructure of your choice. LiveSwitch Cloud and LiveSwitch WebRTC Server share the same API, so you can get started quickly on one and transition effortlessly to the other.

But why did we name it LiveSwitch?

One word: Switchiness, pronounced: switch-e-ness. Is that even a word? We think it should be, so we designed it to be able to:

- switch modes,

- switch session size/scale/geolocation,

- switch platform,

- switch codecs,

- switch between simulcast streams,

- switch bandwidth usage,

- switch video sources/sinks,

- switch on/off actions in your external systems.

That is a lot of switching… so we think Switchiness is a great word for it. Of course, we could have just used the word flexible. Oh, and the Live part? Well, it can do all of these things live in real-time. Let’s look at these elements of switchiness a little closer.

Switchiness Factor 1: Hybrid Architecture

LiveSwitch can dynamically ‘switch’ between the three industry-accepted live video conferencing architecture types to ensure all participants connect in the mode that best matches their device type, network bandwidth, and session size.

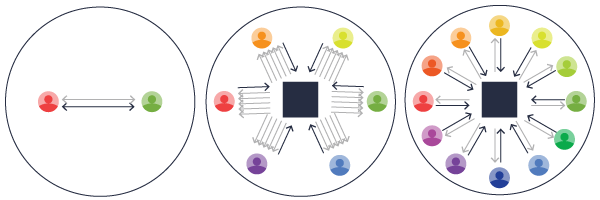

There are three industry-accepted architecture types (aka topologies) used in the world of video conferencing. Each type has its benefits and drawbacks. The topologies are:

- Peer-to-Peer Conferencing (Mesh)

- Selective Forwarding (Routing)

- Multipoint Control Unit (Mixing)

LiveSwitch is unique within the video conferencing industry because it introduces a fourth concept: hybrid topology. Let’s dive deeper into each of these:

What is Peer-to-Peer (Mesh)?

In peer-to-peer, every participant must upload their video stream to every participant and download a stream from every participant -- without the use of a media server. While this type of connection is perfect for a two-party conference and provides the lowest operating cost, it tends to break down quickly as conferences grow in number of participants. This is particularly troublesome for older devices and participants with low bandwidth networks.
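To see why mesh breaks down as calls grow, it helps to count streams. The sketch below is only back-of-the-envelope arithmetic: the function name and the 1000 kbps per-stream figure are assumptions chosen for illustration, not LiveSwitch values.

```python
def mesh_load(participants: int, stream_kbps: int) -> dict:
    """Estimate per-participant and whole-session load for a full-mesh call."""
    if participants < 2:
        raise ValueError("a call needs at least two participants")
    peers = participants - 1  # everyone else in the call
    return {
        "uploads_per_participant": peers,
        "downloads_per_participant": peers,
        "upload_kbps_per_participant": peers * stream_kbps,
        # Every pair of participants shares one peer connection.
        "peer_connections_in_session": participants * peers // 2,
    }


# Assumed 1000 kbps per video stream, purely for illustration.
for n in (2, 4, 8):
    print(n, mesh_load(n, stream_kbps=1000))
```

Per-participant upload grows linearly with the size of the call, while the number of peer connections in the session grows quadratically.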

Additionally, in this mode, functionality such as recording or PSTN/VoIP telephony integration has to take place on the end user’s devices, or may not be possible at all. This increases time to market and support requirements, since implementations must be rebuilt on a per-platform basis.

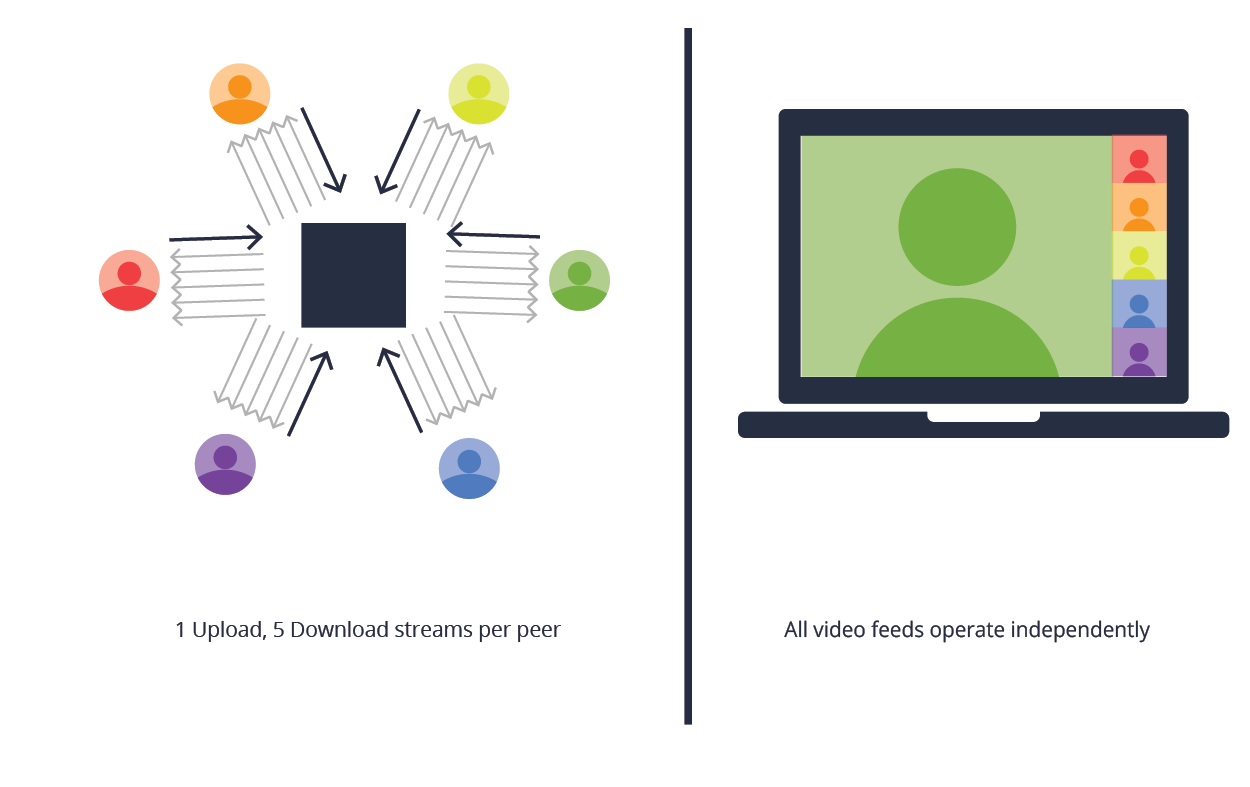

What is a Selective Forwarding Topology (Routing)?

In a Selective Forwarding Unit (SFU) topology, an SFU server is added to the conference. Each participant uploads their encoded video stream to the server, and the SFU then forwards those streams to each of the other participants.

This topology is much more scalable for your clients as it shifts some of the CPU load from the user/client onto the server. It works well with the asymmetric nature of most internet connections by requiring each client to encode, encrypt, and upload its stream only once. This reduces the participant upload bandwidth needed and helps to alleviate pressure on the device itself, especially on mobile.

One of the big advantages forwarding has over a mesh architecture is that it can employ various scaling techniques. Because it acts as a proxy between the sender and receiver, it can monitor bandwidth capabilities of each and selectively apply temporal (frame-rate) and spatial (resolution) scaling to the packet stream as it moves through the server.
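As a rough illustration of temporal (frame-rate) scaling, the sketch below assumes each frame carries a temporal layer id, as in layered video encodings, so that a forwarder can lower the frame rate by dropping the higher layers without decoding anything. The `Frame` type and `forward_frames` function are hypothetical and are not part of the LiveSwitch API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    sequence: int
    temporal_layer: int  # 0 = base layer; higher layers only add frame rate


def forward_frames(frames, max_temporal_layer):
    """Keep only the frames at or below the receiver's target temporal layer."""
    return [f for f in frames if f.temporal_layer <= max_temporal_layer]


# A common three-layer pattern: layer 0 alone gives a quarter of the frame
# rate, layers 0-1 give half, and all three layers give the full rate.
pattern = [0, 2, 1, 2]
frames = [Frame(i, pattern[i % len(pattern)]) for i in range(8)]

for target in (0, 1, 2):
    kept = forward_frames(frames, target)
    print(target, [f.sequence for f in kept])
```

Spatial (resolution) scaling follows the same idea, except that the forwarder chooses between resolution layers rather than frame-rate layers.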

While forwarding requires additional server infrastructure (the SFUs themselves), it is highly efficient. An SFU doesn’t attempt to depacketize the stream (unless recording is activated) or decode the data.

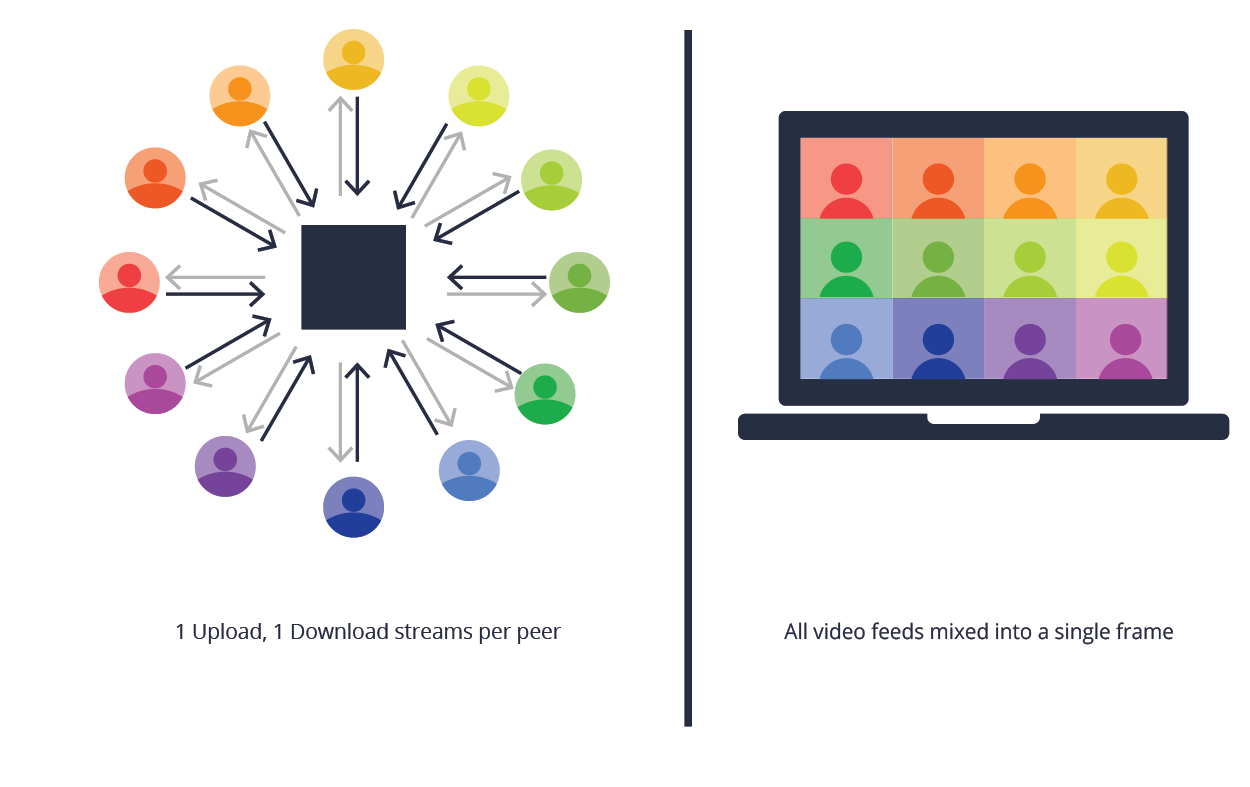

What is a Multipoint Control Unit Topology (Mixing)?

While the SFU is sufficient in many use cases, if the conversation grows beyond a certain point or if any of the participants are using underpowered devices, then a different topology is needed.

A Multipoint Control Unit (MCU) allows your participants to upload individual encrypted video streams to a server. The server then mixes the incoming streams from each participant into a single stream and forwards it as a single feed to each individual client. This means that every participant only has to manage a single bidirectional connection, regardless of the number of clients present.

This is the most efficient approach for the client but the least efficient for the server. The server is responsible for all of the depacketizing, decoding, mixing, encoding, and packetizing. LiveSwitch allows you to optimize this pipeline, but it still requires more server resources to complete in real-time than forwarding.

As with forwarding, mixing can take advantage of scaling techniques. By applying temporal (frame-rate) and spatial (resolution) scaling to the output of the audio and/or video mixer(s), it can adapt quickly to changing network conditions of individual clients, keeping the quality as high as possible for all participants in the session.
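The trade-offs between the three topologies are easiest to see side by side. The following is a minimal sketch that counts the video streams a single client sends and receives in each mode; the function name and the topology labels are our own shorthand for illustration.

```python
def client_streams(topology: str, participants: int) -> tuple:
    """Return (uploads, downloads) of video streams for one client."""
    if participants < 2:
        raise ValueError("a call needs at least two participants")
    others = participants - 1
    if topology == "mesh":
        return others, others  # one stream to and from every other peer
    if topology == "sfu":
        return 1, others       # upload once, receive each forwarded stream
    if topology == "mcu":
        return 1, 1            # upload once, receive one mixed stream
    raise ValueError(f"unknown topology: {topology}")


for n in (2, 6, 20):
    row = {t: client_streams(t, n) for t in ("mesh", "sfu", "mcu")}
    print(n, row)
```

The client-side cost falls as you move from mesh to forwarding to mixing, while the server-side cost rises in the same order.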

What is a Hybrid Topology?

LiveSwitch is unique in the world of video conferencing because it enables a dynamic hybrid topology.

Hybrid topologies are, as their name implies, a combination of mesh, forwarding, and/or mixing. This allows you to always have the most efficient, cost-effective, and best quality connection for your participants.

In a hybrid environment, participants can join a session based on whatever makes the most sense for the session or even for each individual participant. For simple two-party calls, a mesh setup is simple and requires minimal server resources. For small group sessions, broadcasts, and live events, forwarding may better meet your needs. For larger group sessions or telephony integrations, mixing is often the only practical option.

LiveSwitch is able to employ all three topology types within the same video call to create the best possible video call for all users and can even switch topologies dynamically during the call to accommodate changing session sizes, client device limitations, or network bandwidth availability.
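The kind of per-participant decision a hybrid topology makes can be sketched as a simple rule of thumb. Everything in this example is an assumption for illustration: the function name, the flags, and especially the `mesh_max` and `sfu_max` thresholds are hypothetical and do not describe how LiveSwitch actually decides.

```python
def choose_topology(participants: int,
                    low_power_device: bool = False,
                    telephony: bool = False,
                    mesh_max: int = 2,
                    sfu_max: int = 10) -> str:
    """Pick a connection mode for one participant (illustrative heuristic)."""
    if telephony or low_power_device:
        return "mcu"   # a single mixed stream is the lightest for the client
    if participants <= mesh_max:
        return "mesh"  # simple two-party call, minimal server resources
    if participants <= sfu_max:
        return "sfu"   # small group: forward streams without decoding
    return "mcu"       # large session: mix on the server


print(choose_topology(2))
print(choose_topology(6))
print(choose_topology(6, low_power_device=True))
print(choose_topology(50))
```

Because the decision is made per participant, a desktop user and a user on an older phone can end up in different modes within the same session.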

A Hybrid topology is truly the best of all worlds and provides the most flexibility for your application.

Switchiness Factor 2: Scaling & Geo-distribution

LiveSwitch can easily ‘switch’ from a small-scale conference to a large-scale broadcast while maintaining the best quality video feed for all participants.

One key benefit of LiveSwitch’s hybrid topology is that it allows you to scale from small-scale conferences to large-scale broadcasts instantly without affecting video quality for the participants.

But the hybrid architecture is not the only reason that LiveSwitch is so scalable. LiveSwitch’s regionality (or server geo-distribution) also lends itself to high-quality connections. Media servers in one region can cluster with media servers in another region over high-speed backbone networks. This regional distribution of servers is one of the ways we keep latency low between clients and servers and maintain the best user experience.
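One simple way to picture geo-distribution is a client connecting to whichever region answers fastest. The sketch below assumes round-trip times have already been measured; the region names, the numbers, and the selection rule itself are hypothetical and are not taken from LiveSwitch.

```python
def nearest_region(rtt_ms: dict) -> str:
    """Return the region with the lowest measured round-trip time."""
    if not rtt_ms:
        raise ValueError("no regions measured")
    return min(rtt_ms, key=rtt_ms.get)


# Hypothetical measurements, in milliseconds.
measurements = {"us-west": 145.0, "eu-central": 28.5, "ap-south": 210.0}
print(nearest_region(measurements))
```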

Switchiness Factor 3: Cross Platform

LiveSwitch ‘switches’ between most platforms with one powerful API.

One key advantage of LiveSwitch is that it supports most platforms, browsers and devices on the market today. Our client-side software development kit (SDK) offers you and your development team ultimate flexibility when designing and building your applications.

With a single cross-platform API that is virtually 1:1 identical on every single platform, you can save time by building for one platform and then re-using all of your knowledge and learning on the next platform. This greatly decreases the time to market. Further, because LiveSwitch supports so many platforms, including augmented and virtual reality platforms, you never have to worry about the future capabilities of your application as markets change and evolve.

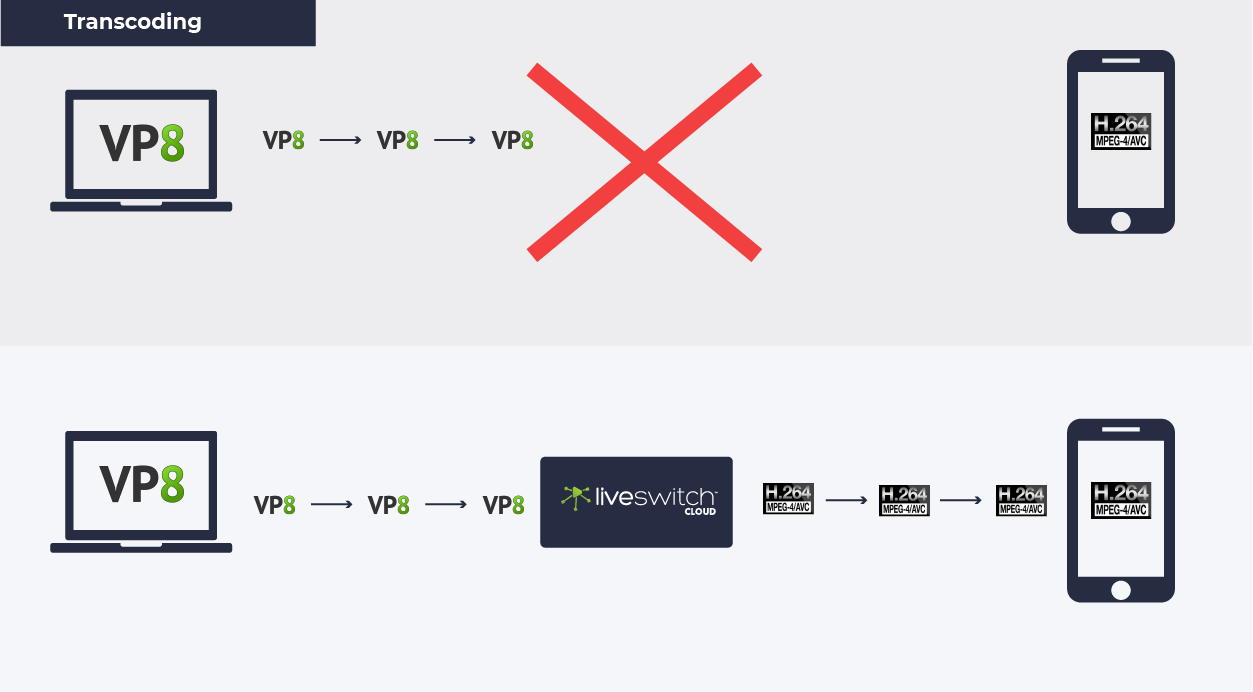

Switchiness Factor 4: Intelligent Transcoding

LiveSwitch can ‘switch’ between audio and video codecs so that two devices can communicate with each other.

In order for devices to communicate with each other, they need to be able to read and understand the messages that are being sent and received. In the world of video conferencing, different device types often encode audio and video in different formats creating barriers for cross-platform communication. For example, Google Chrome on Android doesn't support H.264 and until recently web browsers on iOS did not support VP8 encode/decode. With LiveSwitch, we’ve tackled this problem with something we like to call intelligent transcoding.

Transcoding is the process that converts content from one format to another, to make audio and video viewable across different platforms and devices. A ‘codec’ (a word derived from the terms coder and decoder) is used to compress and decompress content to reduce the network bandwidth needed to transfer the content.

The LiveSwitch media server uses intelligent transcoding to act as a translation service between codecs allowing all devices to communicate seamlessly with each other.
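A minimal sketch of the decision behind transcoding: if the two endpoints share a codec, the server can pass media through untouched; if they do not, it has to translate. The function name and the result shape are assumptions for illustration, and the codec lists are assumed to be in order of preference.

```python
def plan_codec_path(sender_codecs, receiver_codecs):
    """Decide whether media can pass through or needs transcoding."""
    if not sender_codecs or not receiver_codecs:
        raise ValueError("each endpoint must support at least one codec")
    common = [c for c in sender_codecs if c in receiver_codecs]
    if common:
        # Both sides understand this codec, so no conversion is needed.
        return {"transcode": False, "send": common[0], "receive": common[0]}
    # No shared codec: the server decodes one format and re-encodes the other.
    return {"transcode": True,
            "send": sender_codecs[0],
            "receive": receiver_codecs[0]}


print(plan_codec_path(["VP8", "H264"], ["H264"]))
print(plan_codec_path(["VP8"], ["H264"]))
```

Passing media through is always cheaper for the server, so a sensible design transcodes only when no common codec exists.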

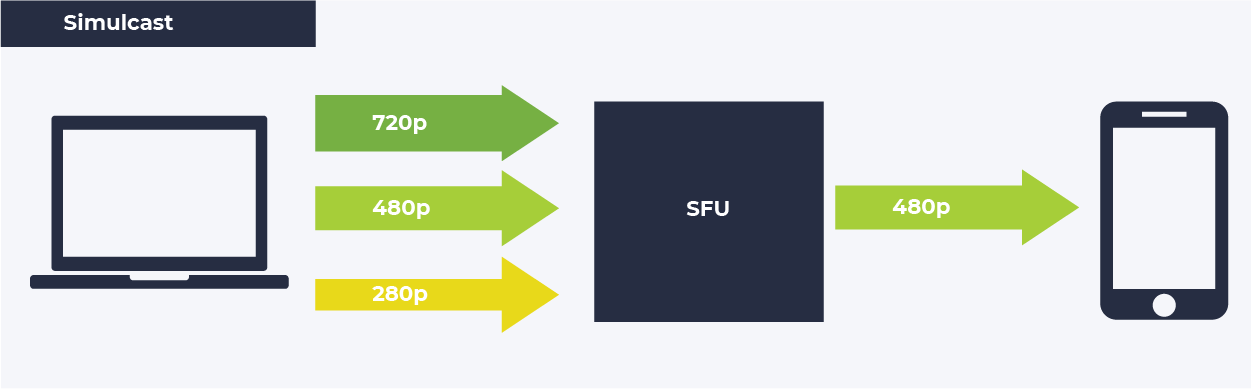

Switchiness Factor 5: Simulcast

LiveSwitch can ‘switch’ between simulcast video streams during broadcasts to provide the best user experience for the audience.

The LiveSwitch media server is always working behind the scenes to ensure that all participants in a broadcast are receiving the highest quality video stream available for their unique circumstance. One way in which this is achieved is through the use of simulcast.

Simulcast is a method where one user sends multiple video streams to an SFU, each with the same media content but with different resolutions and bitrates.

The SFU can then decide which video stream to send to each of the participants in the broadcast based on the capabilities of the device and the network that each audience member is joining from. This means that someone on a fast network can receive a high-quality stream, while someone on a slower network can have a lower-quality one. Without simulcast, the clients with slow networks would either experience dropped frames and video packet loss or the server would need to instruct all broadcasting client devices to reduce their encoded video stream resolution/quality to accommodate the lowest common denominator of all connected participants.
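The selection step can be sketched as picking the highest-bitrate encoding that fits within a viewer's estimated bandwidth. The `Encoding` type, the three layers, and their resolutions and bitrates are assumptions chosen for illustration, not LiveSwitch defaults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Encoding:
    name: str
    height: int
    bitrate_kbps: int


# Hypothetical simulcast layers sent by one broadcaster.
LAYERS = [
    Encoding("low", 180, 150),
    Encoding("mid", 360, 500),
    Encoding("high", 720, 1500),
]


def pick_encoding(layers, available_kbps):
    """Return the best encoding that fits the viewer's estimated bandwidth."""
    by_bitrate = sorted(layers, key=lambda e: e.bitrate_kbps)
    fitting = [e for e in by_bitrate if e.bitrate_kbps <= available_kbps]
    # Fall back to the smallest layer when even that one does not fit.
    return fitting[-1] if fitting else by_bitrate[0]


for kbps in (2000, 600, 100):
    print(kbps, pick_encoding(LAYERS, kbps).name)
```

Each viewer is evaluated independently, so one slow connection never drags down the quality that the rest of the audience receives.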

Sending multiple video streams requires more bandwidth from the broadcaster, but it also provides the best possible user experience for each person connected to the simulcast. LiveSwitch’s simulcast will intelligently turn off any of the broadcasting client’s encoding that is not being consumed by the sessions so that the broadcaster saves bandwidth and does not use more CPU power than is needed.

As an additional bonus, if the simulcast is using more CPU than the broadcaster can sustain, LiveSwitch will incrementally deactivate encodings to free up resources and allow the session to continue uninterrupted. With ultimate flexibility in mind, this can also be controlled manually to provide maximum configurability in your simulcasts. For example, you can configure LiveSwitch to automatically turn off certain streams if the broadcaster is connecting on a mobile network (which is often inherently unreliable), so that you can always ensure that you are creating the best possible broadcast.
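The two behaviours described above, turning off encodings nobody is watching and shedding encodings under CPU pressure, can be sketched together. The function and its `max_layers` parameter are hypothetical, and dropping the most expensive layers first is our assumption; the order LiveSwitch actually uses is not stated here.

```python
def active_encodings(all_layers, consumed_by_viewers, max_layers=None):
    """Work out which simulcast encodings the broadcaster should keep sending.

    all_layers is ordered from cheapest to most expensive.
    """
    # Step 1: stop encoding layers that no viewer is currently receiving.
    active = [layer for layer in all_layers if layer in consumed_by_viewers]
    # Step 2: under CPU pressure, keep only the cheapest max_layers encodings.
    if max_layers is not None:
        active = active[:max_layers]
    return active


layers = ["low", "mid", "high"]
print(active_encodings(layers, {"low", "high"}))
print(active_encodings(layers, {"low", "mid", "high"}, max_layers=2))
```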

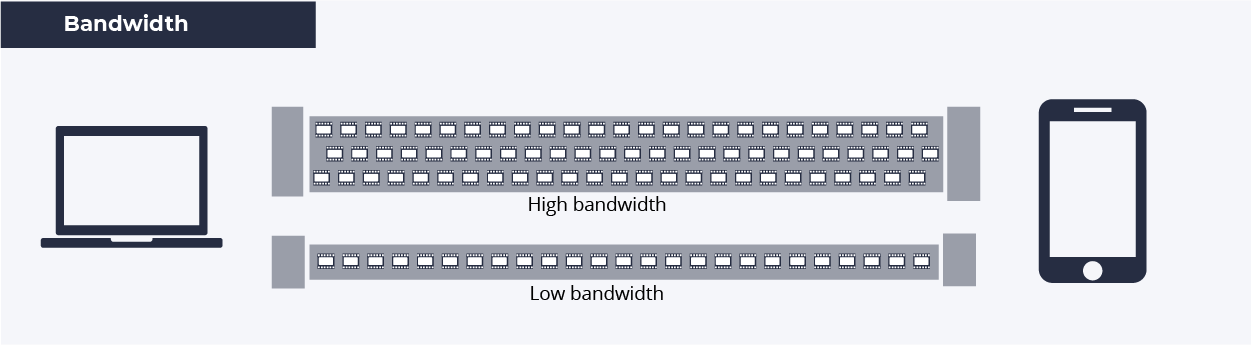

Switchiness Factor 6: Bandwidth Adaptation

LiveSwitch can ‘switch’ (aka adapt) bandwidth usage to limit packet loss and lag.

To ensure the best possible user experience, LiveSwitch uses a process called bandwidth adaptation to limit packet loss and delay.

In simplest terms, bandwidth defines the maximum rate at which data can be transferred between two endpoints. You can imagine it as a pipeline connecting a water source to a receiving tank. If you have a large pipe, you will have the capacity to transfer large amounts of water (or in this case, data packets) with little trouble. If your pipeline is too small, you will not be able to push the water into the pipe fast enough, and data will need to queue up before entering the pipe, eventually being discarded if too much time has elapsed. You may have an extremely large pipe (aka fast connection) leaving your workplace, but that won’t matter much if the receiving client is connected to a slow WiFi hotspot or corporate VPN with less than 1 Mbps of bandwidth available.

That is where bandwidth adaptation comes in. The goal of bandwidth adaptation is to limit packet loss and delay. This is done by adjusting the water flow rate (aka bitrate) to a level that is sustainable for all parties. That level is constantly changing based on what is happening on the network, so LiveSwitch constantly and automatically provides feedback to each client which is used to adjust their sending bitrate to get the best quality experience for each participant.
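A feedback loop of this kind can be sketched with a simple loss-based rule: back off when the receiver reports heavy packet loss, probe upward when loss is negligible, and hold steady in between. The thresholds, step sizes, and bitrate limits below are illustrative assumptions and are not LiveSwitch's actual algorithm.

```python
def next_bitrate(current_kbps: float,
                 loss_fraction: float,
                 min_kbps: float = 100.0,
                 max_kbps: float = 2500.0) -> float:
    """Adjust the sending bitrate from the latest reported packet loss."""
    if not 0.0 <= loss_fraction <= 1.0:
        raise ValueError("loss_fraction must be between 0 and 1")
    if loss_fraction > 0.10:
        # Heavy loss: reduce the flow in proportion to how bad it is.
        target = current_kbps * (1.0 - 0.5 * loss_fraction)
    elif loss_fraction < 0.02:
        # Negligible loss: cautiously probe for more capacity.
        target = current_kbps * 1.05
    else:
        target = current_kbps  # moderate loss: hold steady
    return max(min_kbps, min(max_kbps, target))


bitrate = 1000.0
for loss in (0.0, 0.0, 0.25, 0.05, 0.0):
    bitrate = next_bitrate(bitrate, loss)
    print(f"loss={loss:.2f} -> {bitrate:.0f} kbps")
```

Running the rule on every feedback report lets the bitrate follow the network as conditions change, rather than being fixed at the start of the session.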

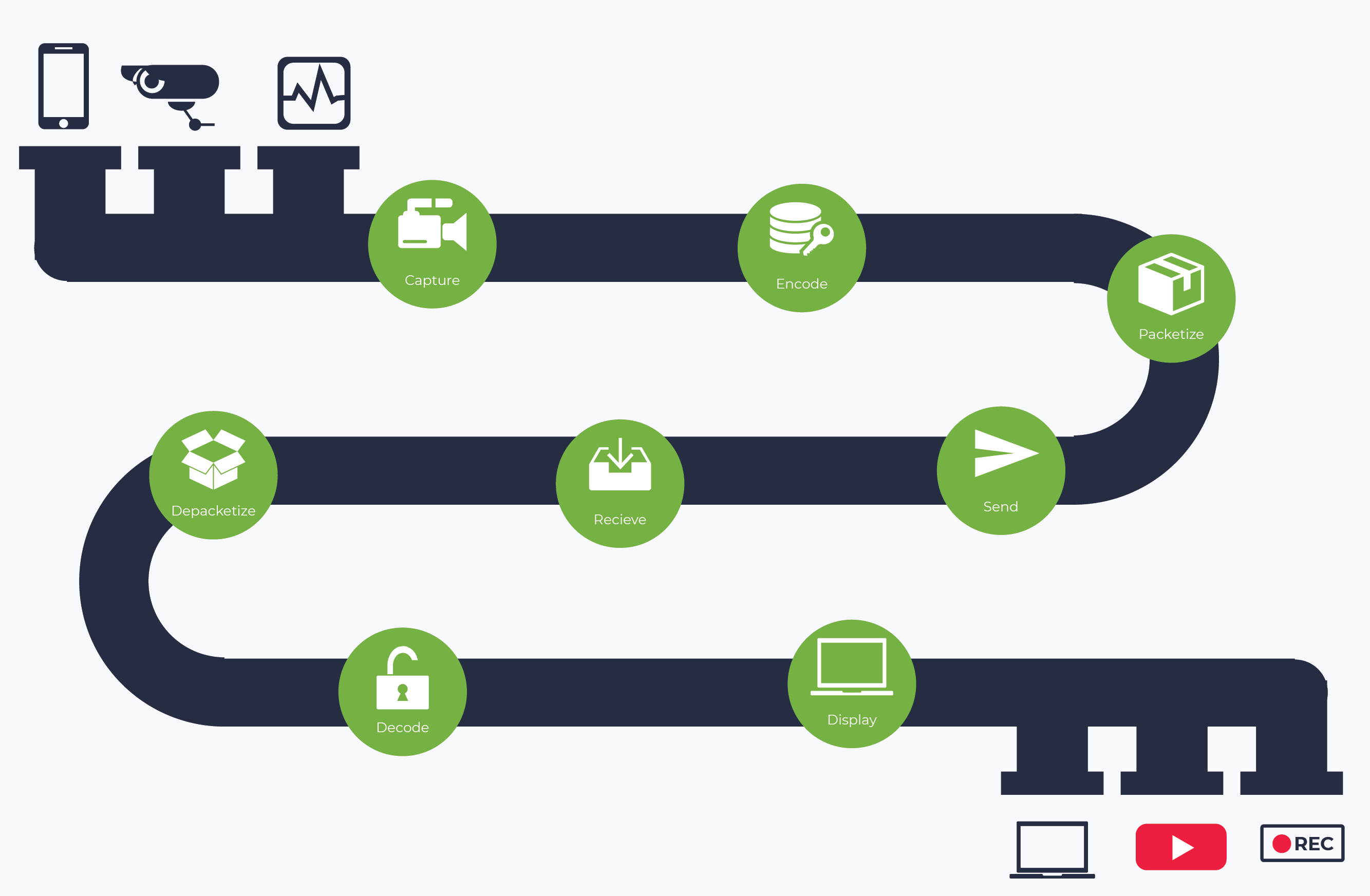

Switchiness Factor 7: Media Pipeline Access for Sources and Sinks

With LiveSwitch, developers can ‘switch’ to/from their choice of audio or video sources and sinks.

Today, internet-connected cameras are found everywhere. Whether it is the cameras in our smartphones, the webcams in our laptops, or even the cameras in augmented/virtual reality gear, they are all potential live video sources for conferencing and streaming. Add other sources, such as pre-recorded video or other providers’ open live streams, and you have a huge assortment of places you can get video content for your streaming and conferencing.

Similarly, the options for viewing live video and saving it for future purposes are also expanding. Live video is viewable on our Smart TVs, web browsers, iOS/Android phone apps, streaming boxes, and virtually anything else with a screen and an internet connection. Additionally, live video conferences and streams are commonly recorded and archived for operational, legal, and analytical reasons to add value for both businesses and the end user. The data that is pulled in (or sourced) can come from many different places and be injected at many different points along the media pipeline, and LiveSwitch gives you the flexibility to choose how this is handled.

With the suite of LiveSwitch client-side SDKs, we’ve given developers unprecedented API access to the entire media pipeline so that they can define multiple sources and sinks and switch them in real-time as their application needs. Need to inject video from a secondary source during a live session? No problem. Need to direct multiple video streams to a post-processing video mixer and recorder? Can do that too. We quickly realized that developers don’t want to have their creativity hampered by the tools they use, so with LiveSwitch we’ve opened up the media pipeline in such a way that they can build their application without limits.
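To make the idea of switchable sources and sinks concrete, here is a toy pipeline in which the active source can be swapped mid-session while every frame fans out to all registered sinks. The `MediaPipeline` class and its methods are hypothetical stand-ins for illustration and are not the LiveSwitch SDK API; frames are plain strings to keep the sketch short.

```python
from typing import Callable, Iterator, List


class MediaPipeline:
    """Pulls frames from one active source and pushes them to every sink."""

    def __init__(self, source: Iterator[str]) -> None:
        self._source = source
        self._sinks: List[Callable[[str], None]] = []

    def add_sink(self, sink: Callable[[str], None]) -> None:
        self._sinks.append(sink)

    def switch_source(self, source: Iterator[str]) -> None:
        self._source = source  # takes effect on the next frame

    def pump(self, frames: int = 1) -> None:
        for _ in range(frames):
            frame = next(self._source)
            for sink in self._sinks:
                sink(frame)


def labelled_frames(label: str) -> Iterator[str]:
    """Endless stand-in for a camera or screen capture."""
    n = 0
    while True:
        yield f"{label}-{n}"
        n += 1


recorded: List[str] = []
pipeline = MediaPipeline(labelled_frames("camera"))
pipeline.add_sink(recorded.append)  # stand-in for a recorder
pipeline.add_sink(print)            # stand-in for an on-screen view
pipeline.pump(2)
pipeline.switch_source(labelled_frames("screen"))
pipeline.pump(1)
```

Keeping sources and sinks behind small interfaces like these is what makes it possible to swap either side without tearing down the session.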

Switchiness Factor 8: External Webhooks

LiveSwitch can extend beyond just simple video conferencing by ‘switching’ how your application interacts with external systems through using webhooks.

OK yes, this may be stretching the switchiness narrative by attempting to align the LiveSwitch product name with the amazing flexibility that its built-in webhooks provide, but we couldn’t honestly write this article without highlighting this incredibly useful feature. With LiveSwitch Webhooks, your LiveSwitch-powered application can interact with virtually any endpoint on the internet.

Using predefined, fully configurable conditions, webhooks can trigger external actions upon a wide range of events that commonly occur within video conferencing sessions. With the LiveSwitch Webhooks feature, you can notify an external system when a user registers or unregisters from the LiveSwitch gateway, which is often useful for determining online presence in a virtual waiting room. Webhooks can also notify an external recording system to begin receiving video at the beginning of a session and trigger post-processing and analysis of the video after the session. The sky is truly the limit for the things that can be done with the broad set of configurable events LiveSwitch exposes to the developer.
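On the receiving end, the waiting-room presence example might look something like the sketch below. The JSON field names (`type`, `userId`) and the event names are hypothetical placeholders, not the real LiveSwitch webhook payload, so check the webhook documentation for the actual schema before building on this.

```python
import json


def handle_webhook(body: bytes, online: set) -> set:
    """Update a presence set from one webhook request body."""
    event = json.loads(body)
    kind = event.get("type")    # hypothetical field name
    user = event.get("userId")  # hypothetical field name
    if user is None:
        return online
    if kind == "client.registered":      # hypothetical event name
        online.add(user)
    elif kind == "client.unregistered":  # hypothetical event name
        online.discard(user)
    return online


waiting_room = set()
handle_webhook(b'{"type": "client.registered", "userId": "alice"}', waiting_room)
handle_webhook(b'{"type": "client.registered", "userId": "bob"}', waiting_room)
handle_webhook(b'{"type": "client.unregistered", "userId": "alice"}', waiting_room)
print(sorted(waiting_room))
```

In a real deployment this function would sit behind an HTTP endpoint in the web framework of your choice, with the request body passed straight in.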

Wrapping it all Up

Our mission to deliver maximum switchiness has been wholly realized and is available today in LiveSwitch. From its hybrid architecture and geo-distribution to its ability to adapt through transcoding, simulcast, and bandwidth adaptation, to its open media pipeline and webhook extensibility, LiveSwitch represents the ultimate choice for flexible and high-quality live video.

So Which LiveSwitch is right for you?

LiveSwitch Cloud is the right choice for you if you need to get started quickly, do not have a dev-ops team, or do not wish to manage your own server infrastructure. It is also a great choice for anyone looking for detailed session analytics and real-time telemetry.

LiveSwitch Server is perfect for anyone who wants more control over their server infrastructure location, provider, and scaling. It can be deployed on the server infrastructure supplier of your choice, whether that be AWS, Azure, Google Cloud, Oracle Cloud, or virtually any other Linux/Windows box. It is also available now as a one-click installation in the Oracle Cloud Marketplace.