The Complete Guide to Scaling Event Platforms with Multipoint Control Units (MCUs)


The virtual events industry shows signs of tremendous growth this year. With the shift from in-person crowd events to virtual and hybrid gatherings, it is all the more critical for platform providers to ensure their solutions can scale to a global audience while minimizing the performance bottlenecks commonly found in traditional video conferencing platforms.

We put together this guide for software developers who are building event platform applications. With LiveSwitch Cloud’s flexible hybrid topologies, platforms can meet global demand with a live video architecture that scales in real time. One of these topologies, the Multipoint Control Unit (MCU), is available within the LiveSwitch Cloud platform. Teams can use it to build optimized platforms, minimize video and audio latency, and integrate live video with traditional broadcasting sources and sinks for dynamic remote fan viewing experiences.

Keep Reading To Learn How To:

- Scale event platforms with MCUs

- Set up MCUs in LiveSwitch Cloud

- Customize MCU layouts at the application layer

- Launch successful MCU event platforms

- Deliver scalable event platforms with best practices

A Brief Introduction to Multipoint Control Units (MCU)

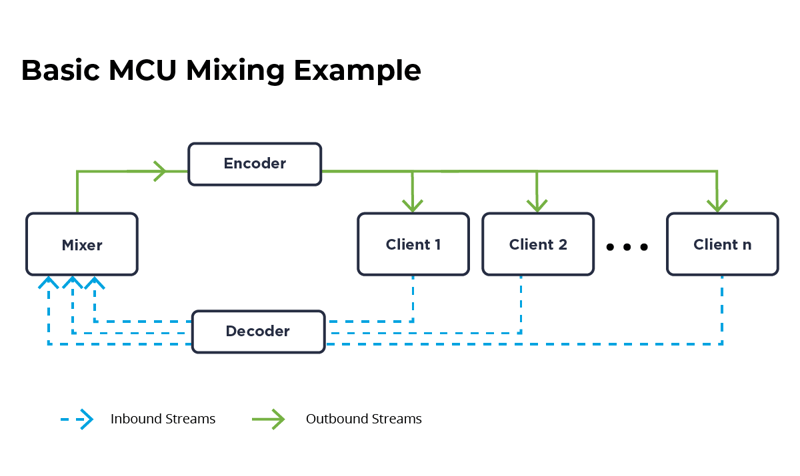

A software-based multipoint control unit (MCU) is a mixer capable of combining and composing multiple inbound media streams into a single outbound media stream. In other words, an MCU decodes the incoming audio and video feeds, mixes them into one composite feed, and re-encodes that feed with the video and audio codecs best suited to each participant's client device. The MCU is a critical technology at the core of many video streaming platforms that display large groups of participants on screen, beyond the typical 3x3 grid.
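To make "combining multiple inbound streams into one outbound stream" concrete, here is a minimal sketch of the video-mixing step only. It assumes raw, already-decoded grayscale frames stored as 2D byte arrays; GridFor and Compose are hypothetical helpers, not LiveSwitch APIs. A real MCU also decodes the incoming streams, handles color and audio, and re-encodes the composite.

```csharp
using System;

// Hypothetical helper: smallest near-square grid (cols x rows) that fits n tiles.
static (int Cols, int Rows) GridFor(int n)
{
    int cols = (int)Math.Ceiling(Math.Sqrt(n));
    int rows = (int)Math.Ceiling(n / (double)cols);
    return (cols, rows);
}

// Composite grayscale frames (height x width byte arrays) into one output frame.
// Each input is scaled into its grid tile with nearest-neighbour sampling;
// unused tiles stay black (0).
static byte[,] Compose(byte[][,] inputs, int outWidth, int outHeight)
{
    var output = new byte[outHeight, outWidth];
    if (inputs.Length == 0) return output;

    var (cols, rows) = GridFor(inputs.Length);
    int tileW = outWidth / cols;
    int tileH = outHeight / rows;

    for (int i = 0; i < inputs.Length; i++)
    {
        var src = inputs[i];
        int srcH = src.GetLength(0), srcW = src.GetLength(1);
        int originX = (i % cols) * tileW; // tiles fill row by row
        int originY = (i / cols) * tileH;

        for (int y = 0; y < tileH; y++)
        {
            for (int x = 0; x < tileW; x++)
            {
                // Map each tile pixel back to the nearest source pixel.
                int sy = y * srcH / tileH;
                int sx = x * srcW / tileW;
                output[originY + y, originX + x] = src[sy, sx];
            }
        }
    }
    return output;
}

// Usage: five 4x4 "camera frames", each a flat grey level, mixed into one 12x8 frame.
var frames = new byte[5][,];
for (int i = 0; i < frames.Length; i++)
{
    frames[i] = new byte[4, 4];
    for (int y = 0; y < 4; y++)
        for (int x = 0; x < 4; x++)
            frames[i][y, x] = (byte)(50 * (i + 1));
}
var mixed = Compose(frames, 12, 8);
Console.WriteLine($"{mixed.GetLength(1)}x{mixed.GetLength(0)}"); // prints 12x8
```

Five inputs produce a 3x2 grid, so the sixth tile stays empty. Every viewer then receives this single frame instead of five separate streams.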

Streaming platforms use MCU connections to optimize event platforms and display feeds in clustered layouts. This technology helps platform providers host thousands of participants at any given moment. Participants joining an MCU-enabled broadcast can send and receive real-time feeds in layouts designed for interactivity.

Applications of Multipoint Control Units: From Telephony to Event Platforms

Multipoint Control Unit technology has been around for a while. Previously, SIP and H.323 providers used MCUs to connect conferencing participants dialling in from their phones to a video meeting. To make this work, the call's single bidirectional audio and video feed is sent to the Media Server. There, it is transcoded and mixed with the other participants' feeds. Finally, the mixed feed is broadcast to the other client devices connected to the platform.

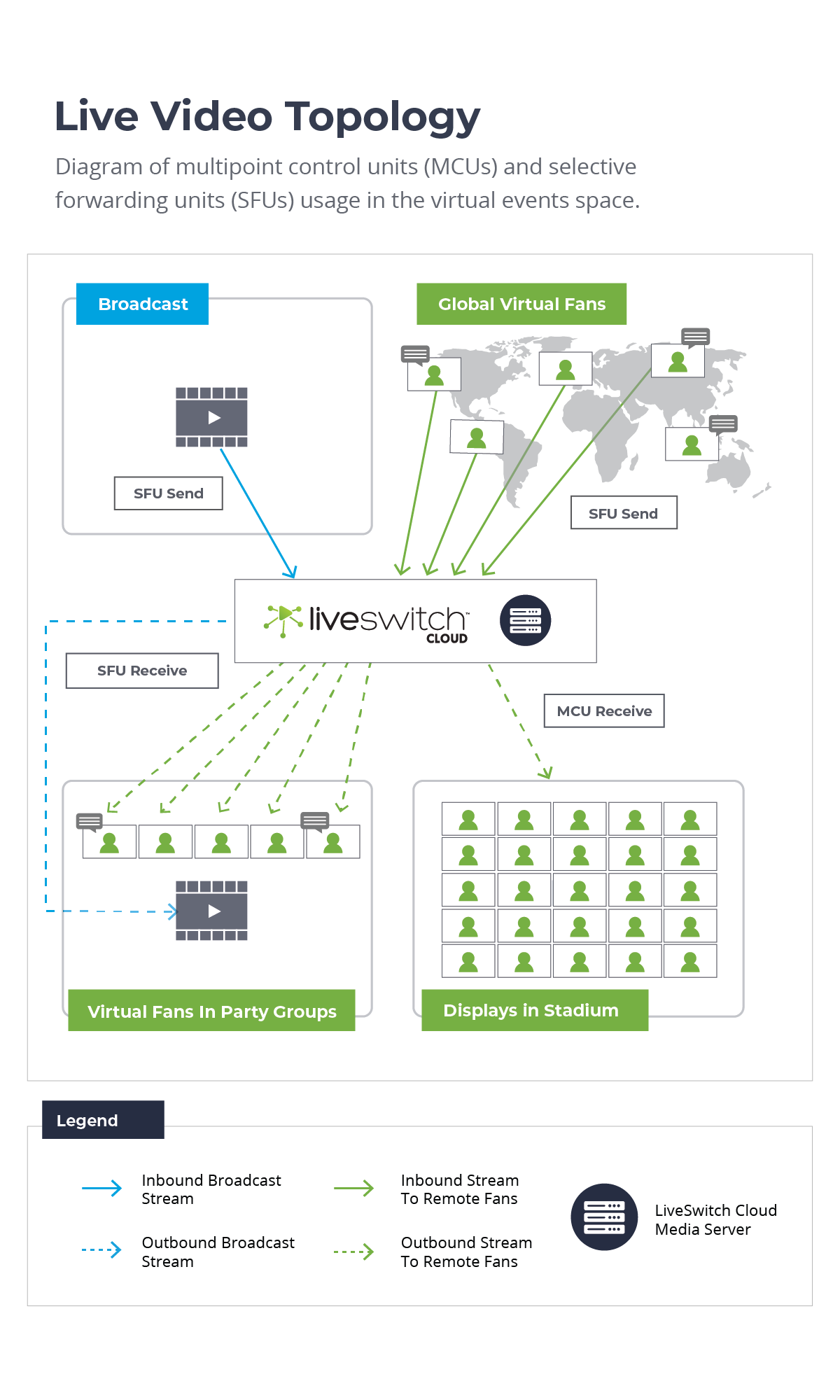

Today, MCUs have found their place in the virtual events space, especially when they are paired with Selective Forwarding Units (SFUs). With hybrid topologies, bidirectional AV feeds from an event platform's audience can be relayed to the production screens inside a studio. At the same time, an SFU can carry the broadcast from the venue to a remote audience of thousands of viewers. This concept is illustrated in the diagram below. The result of this interplay between MCU and SFU topologies is that event producers can keep fans engaged and reach thousands of participants around the world, all with low-latency live video streaming.

Evaluating Multipoint Control Units for a Virtual Event Platform Environment

Live video developers familiar with WebRTC technologies and streaming APIs know that Multipoint Control Units are a key building block for video streaming experiences that scale into the thousands of participants.

One common criticism of MCUs is the intensive CPU processing they require in real time. Because an MCU shifts the decoding, mixing, and encoding of video streams from each participant’s client device to the Media Server, event platforms can find that relying on MCUs alone is costly and suboptimal. However, if a platform provider wants to deliver optimal streams to under-powered client devices, an MCU's benefits often outweigh its costs.
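The trade-off can be made concrete with a back-of-the-envelope count of streams and decodes. The sketch below is a simplified model, not a LiveSwitch measurement: it assumes every participant sends one video stream and wants to see everyone else, no simulcast, and a single shared MCU mix for all viewers. The Load function is hypothetical.

```csharp
using System;

// Per-participant and server load for n participants under each topology.
// Simplified model: one video stream per participant, no simulcast,
// and one shared MCU mix for every viewer.
static (int Upload, int Download, int ClientDecodes, int ServerDecodes, int ServerEncodes)
    Load(string topology, int n) => topology switch
{
    "P2P" => (n - 1, n - 1, n - 1, 0, 0), // each peer sends a copy to every other peer
    "SFU" => (1, n - 1, n - 1, 0, 0),     // server forwards packets without decoding
    "MCU" => (1, 1, 1, n, 1),             // server decodes every input, encodes one mix
    _ => throw new ArgumentException($"unknown topology {topology}")
};

foreach (var t in new[] { "P2P", "SFU", "MCU" })
{
    var l = Load(t, 50);
    Console.WriteLine($"{t}: up={l.Upload} down={l.Download} clientDecodes={l.ClientDecodes} " +
                      $"serverDecodes={l.ServerDecodes} serverEncodes={l.ServerEncodes}");
}
```

With 50 participants, an SFU client decodes 49 streams while an MCU client decodes one. The MCU's Media Server decodes all 50 inputs instead, which is exactly the CPU cost the criticism refers to.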

We are aware of the criticisms of using MCUs to power event platforms. To address this issue, LiveSwitch Cloud developers can pair Multipoint Control Units with Selective Forwarding Units using our flexible live video API and platform. Developers using LiveSwitch Cloud can leverage its flexibility to shape the future of virtual event technology - in the way that works best for their operations.


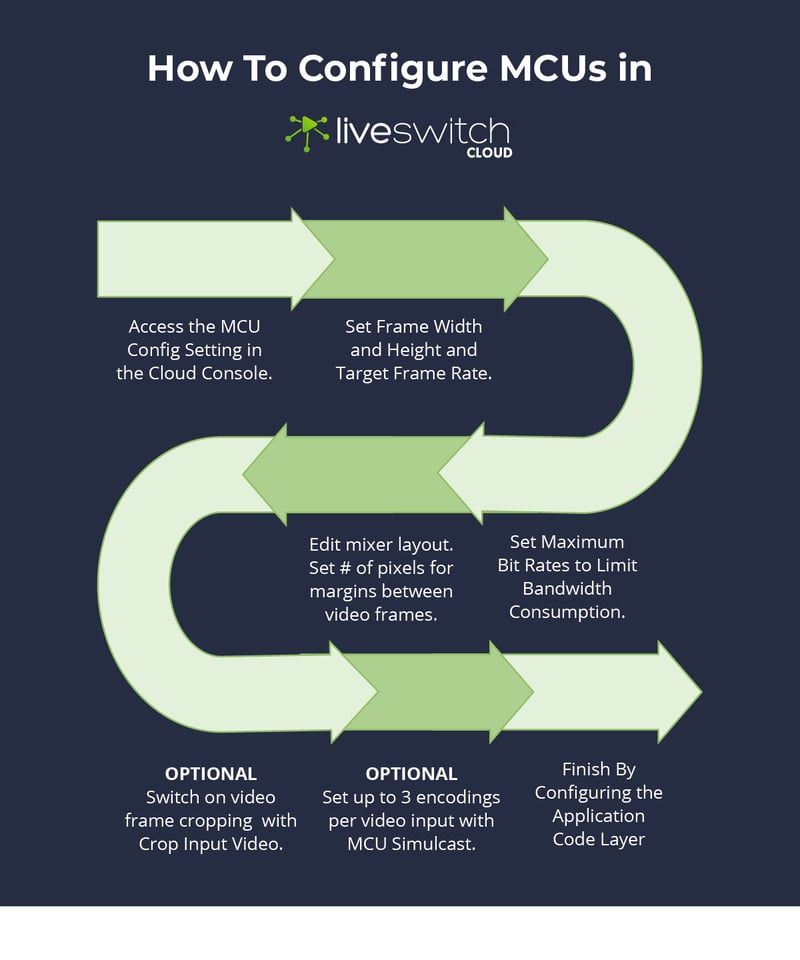

How To Configure LiveSwitch Cloud MCUs

Developers building platforms with LiveSwitch Cloud are in for a real treat. With the Multipoint Control Unit (MCU) configuration feature inside LiveSwitch Cloud, developers can manage how MCUs are used. The MCU feature can be configured in four main steps with two further optional settings that make flexible streaming possible. Once the MCU settings have been set from within the Console, developers can then dive into the application code layer to customize the layout and appearance of the MCU.

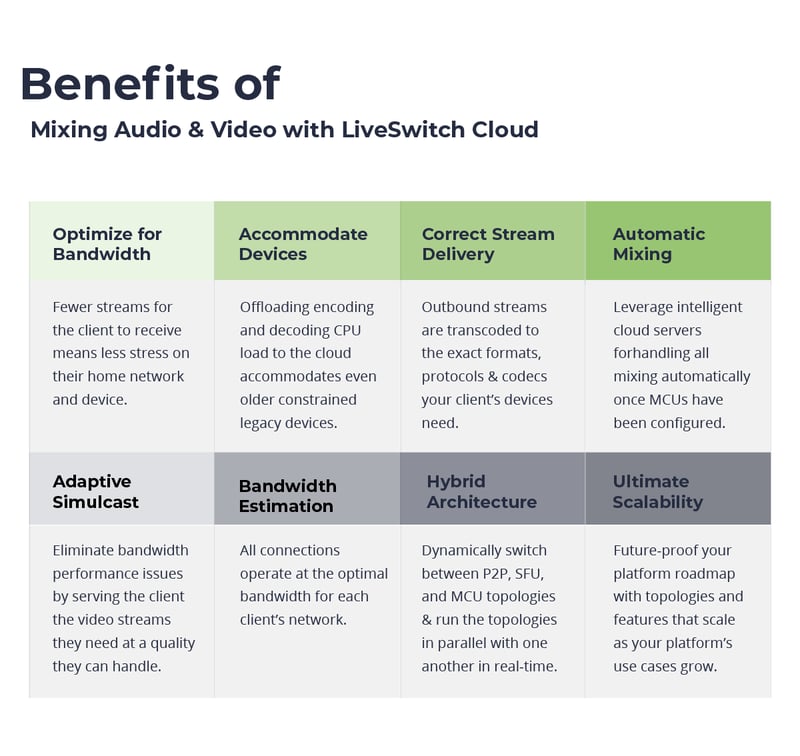

How To Display SFU & MCU Connections and Render Flexible Layouts

As LiveSwitch developers might have noticed, the Cloud console has separate configuration modules for P2P, SFU, and MCU. This means platforms can output combined video layouts that render multiple connection types in a single session. Mixing video and audio feeds with LiveSwitch Cloud gives platforms several benefits: streams can be optimized for changes in bandwidth and network conditions, reach clients joining from underpowered devices, and use the best video and audio codecs for each individual end user. This is a game-changer for virtual events with multiple video sources and sinks. For example, a client can open a bidirectional MCU connection for one source while also exchanging media with other participants over SFU upstream and downstream connections:

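```csharp
// Assumes an already-joined channel (FM.LiveSwitch.Channel), a started localMedia,
// a layoutManager, and an app-defined RemoteMedia class.

// Create a bidirectional MCU connection that both sends and receives.
var mcuRemoteMedia = new RemoteMedia();
var mcuAudioStream = new FM.LiveSwitch.AudioStream(localMedia, mcuRemoteMedia);
var mcuVideoStream = new FM.LiveSwitch.VideoStream(localMedia, mcuRemoteMedia);
var mcuConnection = channel.CreateMcuConnection(mcuAudioStream, mcuVideoStream);
layoutManager.AddRemoteView(mcuRemoteMedia.Id, mcuRemoteMedia.View);
mcuConnection.Open();

// Create an upstream SFU connection that sends my audio and video data.
// Each connection gets its own stream objects.
var upstreamAudioStream = new FM.LiveSwitch.AudioStream(localMedia);
var upstreamVideoStream = new FM.LiveSwitch.VideoStream(localMedia);
var upstreamConnection = channel.CreateSfuUpstreamConnection(upstreamAudioStream, upstreamVideoStream);
upstreamConnection.Open();

// For every participant upstream connection I want to ingest, I also need to open
// a receive-only downstream SFU connection.
channel.OnRemoteUpstreamConnectionOpen += (remoteConnectionInfo) =>
{
    // ...
    var remoteMedia = new RemoteMedia();
    var audioStream = new FM.LiveSwitch.AudioStream(remoteMedia);
    var videoStream = new FM.LiveSwitch.VideoStream(remoteMedia);
    layoutManager.AddRemoteView(remoteMedia.Id, remoteMedia.View);
    var downstreamConnection = channel.CreateSfuDownstreamConnection(remoteConnectionInfo, audioStream, videoStream);
    downstreamConnection.Open();
    // ...
};
```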

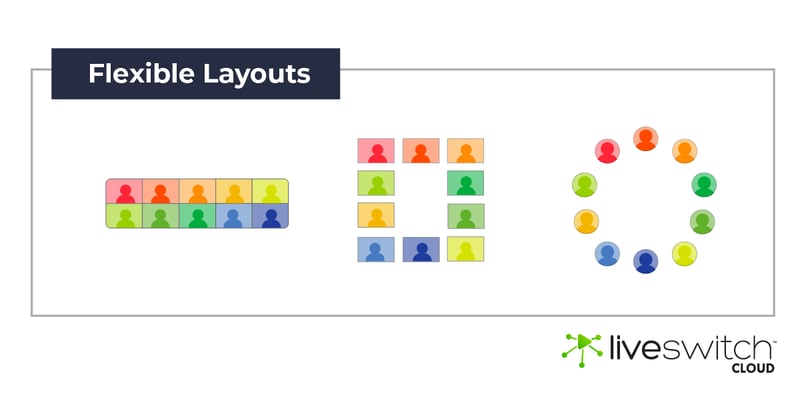

Customize MCU Remote Viewer Layouts

LiveSwitch Cloud's LayoutManager class can be used to customize the video layouts seen by the remote viewer. Custom elements such as labels and buttons can be added to the remote video view for real-time interactivity. Popular interactions that event platforms have added to their MCU layouts include hand-raising, crowd cheering, and live polling. Developers build these layouts with LayoutManager and its SetLocalView and AddRemoteView methods. This level of customization lets developers implement virtually any layout to recreate in-person event experiences for their customers.
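As an illustration of the layout logic an application might compute before positioning its views, here is a hypothetical "spotlight" layout: the active speaker fills the top of the view, the other participants share a filmstrip along the bottom, and each tile gets a label that shows a raised hand. Spotlight, LabelFor, and the 25% filmstrip height are assumptions for this sketch. It only computes geometry and labels and does not call the LiveSwitch LayoutManager.

```csharp
using System;
using System.Collections.Generic;

// Overlay text for a tile: the participant's name plus a raised-hand marker.
static string LabelFor(string id, ISet<string> raisedHands) =>
    raisedHands.Contains(id) ? $"{id} (hand raised)" : id;

// Hypothetical spotlight layout: speaker on top, filmstrip of everyone else below.
static List<(string Id, int X, int Y, int W, int H, string Label)> Spotlight(
    string speakerId, IReadOnlyList<string> others, ISet<string> raisedHands,
    int width, int height, double stripFraction = 0.25)
{
    var tiles = new List<(string Id, int X, int Y, int W, int H, string Label)>();
    int stripH = others.Count == 0 ? 0 : (int)(height * stripFraction);

    // The speaker fills everything above the filmstrip.
    tiles.Add((speakerId, 0, 0, width, height - stripH, LabelFor(speakerId, raisedHands)));

    for (int i = 0; i < others.Count; i++)
    {
        // Equal-width filmstrip tiles; the last one absorbs any rounding remainder.
        int tileW = width / others.Count;
        int x = i * tileW;
        int w = i == others.Count - 1 ? width - x : tileW;
        tiles.Add((others[i], x, height - stripH, w, stripH, LabelFor(others[i], raisedHands)));
    }
    return tiles;
}

var layout = Spotlight("host", new[] { "alice", "bob", "carol" },
                       new HashSet<string> { "bob" }, 1280, 720);
foreach (var t in layout)
    Console.WriteLine($"{t.Label}: {t.W}x{t.H} at ({t.X},{t.Y})");
```

Keeping layout math separate from view handling like this makes it easy to swap in other arrangements (grid, stage, audience wall) without touching connection code.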

Virtual Events with LiveSwitch Cloud

Sports and entertainment, major business industry conferences, and virtual event platforms have all used MCUs as part of their large-scale video platforms. LiveSwitch Cloud's MCU feature is highly flexible and can be used for a number of different use cases in the virtual events space. Developers can enjoy the ability to customize live video and scale video platforms to reach a bigger audience.

One appealing benefit of mixing participant video and audio feeds into a single screen is the ability to monitor participant interactions and behaviors. An MCU can display many video feeds at once on production control room screens, at a resolution and frame rate suited to the event staff who moderate streaming participants.

This optimized resolution and frame rate applies only to the moderators' view; the monitored participants still receive the highest-quality feeds their devices and networks can handle. The quality of the monitor feed can be adjusted at any time and takes effect immediately, because the change is made where the Media Server encodes the mixed feed and does not depend on participants having extra bandwidth available.

Bringing It Together: Best Practices For Scaling Virtual Event Platforms

Practice #1: Use a Live Video Architecture With Hybrid Switching

In virtual productions, where streams often include audiences of 400+ split across a video conferencing grid, a scalable video architecture ensures productions can accommodate large and changing numbers of users. With LiveSwitch’s hybridization of peer-to-peer (P2P), forwarding (SFU), and mixing (MCU) video topologies, video feeds can scale based on the number of participants. This hybrid architecture lets developers build dynamic, scalable applications that reach very large audiences.
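A simplified way to picture hybrid switching is a rule that picks a topology from the session size and the kind of devices joining. The sketch below is hypothetical: ChooseTopology is not a LiveSwitch API, and the thresholds of 4 (P2P) and 25 (SFU grid) are placeholders to tune for your own platform. Real hybrid deployments can also mix topologies within one session, for example MCU for the production view and SFU for the audience.

```csharp
using System;

// Hypothetical session-level topology choice. Thresholds are placeholders.
static string ChooseTopology(int participants, bool constrainedClients,
                             int maxP2P = 4, int maxSfuGrid = 25)
{
    if (participants < 2) return "none"; // nobody to connect to

    // Small groups on capable devices can connect directly.
    if (!constrainedClients && participants <= maxP2P) return "P2P";

    // Mid-sized grids: forward streams, let clients decode them.
    if (!constrainedClients && participants <= maxSfuGrid) return "SFU";

    // Large sessions or under-powered devices: mix on the server, one decode per client.
    return "MCU";
}

foreach (var n in new[] { 3, 12, 400 })
    Console.WriteLine($"{n} participants -> {ChooseTopology(n, constrainedClients: false)}");
```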

Practice #2: Add MCUs to Your Platform Architecture

MCUs allow virtual event platforms to broadcast streams from small watch groups to thousands of remote watchers. When virtual platforms want to establish real-time bidirectional AV feeds, the MCU is often the topology they have in mind. Bundled with an advanced live video tech stack such as LiveSwitch, this technology helps developers control operating expenses for cost-effective streaming, while participants who connect to a LiveSwitch-powered platform receive the best-quality feed for their device and network conditions.
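One way to read "the best-quality feed for their device and network conditions" is as a per-viewer choice from an encoding ladder. The sketch below assumes a hypothetical ladder and a simple rule (stay within 80% of the estimated bandwidth and do not exceed the device's useful resolution); neither the rungs nor PickRung come from LiveSwitch. In an MCU, each distinct output quality generally means another encode on the Media Server, so a small ladder shared by many viewers keeps CPU costs in check.

```csharp
using System;

// Hypothetical encoding ladder (height in pixels, bitrate in kbps), best first.
// The rungs are illustrative placeholders, not LiveSwitch defaults.
var ladder = new (int Height, int Kbps)[]
{
    (1080, 4000), (720, 2000), (480, 1000), (360, 600), (180, 250)
};

// Pick the best rung that fits the viewer's estimated bandwidth (with headroom)
// and does not exceed the resolution the device can usefully display.
static (int Height, int Kbps) PickRung((int Height, int Kbps)[] rungs,
                                       int estimatedKbps, int maxDeviceHeight,
                                       double headroom = 0.8)
{
    double budget = estimatedKbps * headroom;
    foreach (var rung in rungs)
    {
        if (rung.Kbps <= budget && rung.Height <= maxDeviceHeight) return rung;
    }
    return rungs[rungs.Length - 1]; // fall back to the lowest rung
}

Console.WriteLine(PickRung(ladder, estimatedKbps: 3000, maxDeviceHeight: 1080)); // prints (720, 2000)
```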

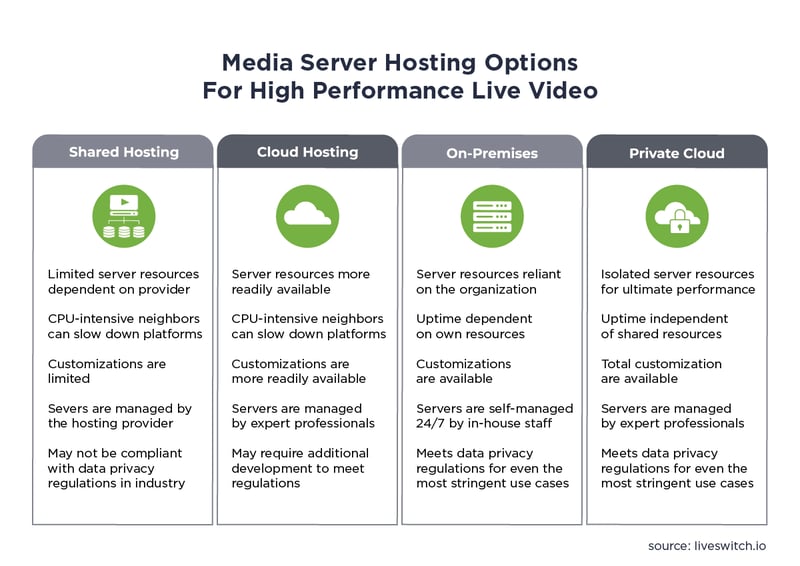

Practice #3: Choose the Right Server Hosting Solution

Earlier, we discussed how MCUs move the processing required to decode, mix, and encode live video onto the platform’s Media Servers. Because MCUs are CPU-intensive, selecting the right server host for your platform is an important decision. The right server infrastructure keeps the platform stable, so that when thousands of participants join a virtual production, Media Servers can handle the added connection requests. Teams building with LiveSwitch can choose from shared, cloud, on-premises, and private cloud infrastructure to meet their exact requirements.
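Because sizing depends on how much CPU each mix costs, a rough capacity estimate is a useful starting point when comparing hosting options. This sketch assumes you have measured the average number of cores one concurrent mix consumes on your hardware; ServersNeeded, the 70% utilization target, and the example numbers are illustrative placeholders, not LiveSwitch figures.

```csharp
using System;

// Back-of-the-envelope Media Server count for concurrent MCU mixes.
// coresPerMix should come from your own load tests.
static int ServersNeeded(int concurrentMixes, double coresPerMix,
                         int coresPerServer, double targetUtilization = 0.7)
{
    if (concurrentMixes <= 0) return 0;
    double usableCores = coresPerServer * targetUtilization; // headroom for spikes
    double totalCores = concurrentMixes * coresPerMix;
    return (int)Math.Ceiling(totalCores / usableCores);
}

// Made-up example: 40 mixes at 1.5 cores each on 16-core servers.
Console.WriteLine(ServersNeeded(40, 1.5, 16)); // prints 6
```

Here 40 × 1.5 = 60 cores are needed against about 11.2 usable cores per server, which rounds up to 6 servers. Rerunning the estimate with each host's core counts makes the hosting comparison concrete.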

Practice #4: Move Projects Forward With Support

Finally, when teams choose a live video tech stack to power their event platform, they should decide what level of support their roadmap requires. For example, the LiveSwitch Cloud platform is a powerful, flexible solution for the virtual events and event production space. Its flexibility often leads development teams to work with our Professional Services team to climb the learning curve and shorten development timelines. In fact, we believe one factor in our virtual event clients' success is that they understood the value of working with a team of live video experts.

Dream up your platform and let us help make it a reality. Contact our team of experts and ask us how we can help you build or scale the perfect virtual event production to a global audience with LiveSwitch Cloud. Whether you require a project manager, senior developers or an entire team to scale and run platforms smoothly 24/7, our team is here to help.